Web developers can play a vital role in the success of any SEO campaign. It’s important that developers have some understanding of Search Engine Optimisation (SEO) and how it plays a role in ranking a client’s website. This article aims to familiarise developers with the concepts of SEO and to help them understand why getting everything right matters to their clients’ rankings.

Before we begin, let’s make two assumptions. The SEO campaign is:

- For a business website that’s recently been built

- Developed on one of the 4 popular Content Management Systems (CMS):

  - WordPress

  - Shopify

  - Squarespace

  - Wix

Based on these assumptions, you are required to build the website so that it incorporates some specific requirements from an SEO specialist. There are many other CMSs on the market, all with their own merits, but these are by far the most popular ones that we, as an agency, work on. So now that we have the shiny new site ready to go, the sections below explain why the SEO specialist will ask you to incorporate those specific requirements.

This SEO for Developers article is an exercise in defining some of the more technical SEO processes to help Web Developers understand the importance of their central role in the success of any SEO campaign that they’re involved in.

Tracking

We must track the progress of every SEO campaign from the beginning, since it’s important to gauge how effective the campaign is.

It is equally important to gauge ineffectiveness, since a failing campaign needs to be fixed. To make that fix, we need to know what’s broken, how to fix it and who should fix it.

As with the CMS, there are many options involved with tracking. These are the ones we use:

GOOGLE TRACKING TOOLS

The first set of tracking codes we install is (not surprisingly) from Google. The 3 main tools we use are:

- Google Analytics (GA),

- Google Search Console (GSC), and

- Google Tag Manager (GTM)

These tools offer a vast amount of data and metrics, giving us an almost complete overview of:

- how the website is being found

- who by

- where they came from

- where they left

- who saw what

- how long they looked at it for, and

- how many people took the effort to contact you.

THIRD PARTY TRACKING TOOLS

Google provides an outstanding set of tools to track user engagement. We’ve added to this to enable us to deliver better results. Over the past 20 years, we have shortlisted 4 of the best tools to help us monitor the hundreds of SEO campaigns we’ve worked on. These are:

- Moz

- Semrush

- Ahrefs

- AWR Cloud

These tools are used throughout our clients’ SEO campaigns, including:

- initial keyword research

- competitor and local SEO analysis

- tracking keyword rankings, and

- reporting

Mobile-First Development

Mobile-first indexing for websites has been with us for a few years. Google started to implement its mobile-first approach in late 2016 and originally announced September 2020 as the date for switching all websites over, so Google now predominantly indexes the mobile version of a website rather than the desktop version. A development team needs to ensure that there is a consistent user experience across all devices, from mobile and tablet to desktop.

Developing mobile-first is a better approach, as it requires web development teams to really focus on what’s important to the user and what information they need to see first. Getting these priorities right improves the user experience on every device, because the user’s needs have been clearly defined and met.

Hypertext Markup Language (HTML) & Cascading Style Sheets (CSS)

As a developer, you will no doubt be familiar with the most popular programming languages used to develop websites, such as PHP (Hypertext Preprocessor) and JavaScript. These two languages provide the functionality and support the interaction that most websites rely on.

However, as you may have heard, “content is king”. This could be updated to read “written content is king”. The layout and presentation of that content are built using a combination of HTML and Cascading Style Sheets (CSS).

WHY HTML IS IMPORTANT TO SEO

Aside from the obvious need for people to access and read a website’s content, HTML code matters to SEO because HTML tags inform search engines and screen readers about the semantic structure of the web page. Well-written code is as important as well-written copy when it comes to ranking pages, as Google favours pages that deliver on both of these points.
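To make the idea of semantic structure more concrete, here is a minimal sketch in Python that extracts the heading outline of a page, which is roughly the skeleton a crawler or screen reader can infer from the markup. The page content and the `heading_outline` helper are hypothetical and only use the standard library; this is not how any particular search engine parses a page.

```python
from html.parser import HTMLParser


class OutlineParser(HTMLParser):
    """Collects (tag, text) pairs for the heading elements h1 to h6."""

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.outline = []      # e.g. [("h1", "Page title"), ...]
        self._current = None   # heading tag we are currently inside, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self._current = tag
            self._text = []

    def handle_data(self, data):
        if self._current:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            self.outline.append((tag, "".join(self._text).strip()))
            self._current = None


def heading_outline(html):
    """Return the headings of an HTML document in document order."""
    parser = OutlineParser()
    parser.feed(html)
    return parser.outline


# Hypothetical page markup, used only for illustration.
page = """
<main>
  <h1>Emergency Plumber in Leeds</h1>
  <h2>Our services</h2>
  <h3>Boiler repair</h3>
  <h2>Contact us</h2>
</main>
"""

for tag, text in heading_outline(page):
    indent = "  " * (int(tag[1]) - 1)  # indent by heading level
    print(f"{indent}{tag}: {text}")
```

A page whose outline has a single h1 and sensibly nested h2 and h3 headings is generally easier for both search engines and assistive technology to interpret than one built from generic div elements.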

WHY CSS IS IMPORTANT TO SEO

The main SEO benefit of using CSS is page speed: light, fast-loading web pages are prioritised by search engines. Search engines look at the code on a page as the page loads, as opposed to only what is written for the user. Since the most important content is usually located at the top of the page, search engines crawl this first, so lean, well-written CSS helps that content to be crawled quicker. In short, load times matter.

Structured Data & Schema.org

We use structured data in a few ways. It is a standardised format for providing information about a web page and classifying the content on the page. For example, on an online store we would place a product schema on a product page to describe some of the product’s attributes, such as:

- its name

- the location of the product’s image

- stock-keeping unit (SKU)

- an aggregate review rating from 1 to 5

- price, and

- the currency it’s being sold in.
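As an illustration, the attributes listed above could be expressed as schema.org `Product` markup in JSON-LD. The sketch below builds that markup in Python; the product details and the `product_json_ld` helper are made up for the example, and the exact set of properties a given search engine expects may differ, so treat it as a starting point rather than a definitive template.

```python
import json


def product_json_ld(name, image_url, sku, rating, review_count, price, currency):
    """Return a schema.org Product description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "image": image_url,
        "sku": sku,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,   # average rating on the 1 to 5 scale
            "bestRating": 5,
            "worstRating": 1,
            "reviewCount": review_count,
        },
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,  # ISO 4217 code, e.g. "GBP"
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product, used only for illustration.
markup = product_json_ld(
    name="Stainless Steel Water Bottle",
    image_url="https://www.example.com/images/water-bottle.jpg",
    sku="WB-750-SS",
    rating=4.6,
    review_count=128,
    price=24.99,
    currency="GBP",
)

# The JSON-LD is embedded in the page inside a script element.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

On a CMS such as WordPress or Shopify this markup is usually generated by the theme or a plugin, but the resulting output on the page has the same shape.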

We always use structured data to describe our clients’ services, as well as to describe the client as a local business or as an organisation. Structured data does matter to an SEO campaign. We’ve found it to be an effective tactic to pass important information on a web page to Google and other search engines.

Another benefit is that the search engine is then able to provide additional features on the Search Engine Results Pages (SERPs) that will further enhance the visibility of your content. These features are referred to as rich results (previously known as rich snippets). This is what they look for:

Make Google’s Work Easier With A Robots.txt File

A robots.txt file provides search engine crawlers and bots with data on which pages or files they can or can’t request from your site.

A robots.txt file can also be used to avoid overloading your site with page and file requests. It should not be used as a mechanism for keeping a web page out of Google, because a page that is blocked from crawling can still be indexed if other pages link to it. A robots.txt file should be seen as a set of requests to search engine crawlers and bots about which pages and files to crawl.

Search engines can be programmed to crawl a website regardless of what’s written in a robots.txt file.
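To see how a well-behaved crawler interprets these requests, the following sketch uses Python’s standard `urllib.robotparser` module to evaluate a small robots.txt file. The rules, URLs and the `is_crawlable` helper are hypothetical examples, not a recommended configuration for any particular site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url, user_agent="Googlebot"):
    """Return True if the rules above ask this user agent not to skip the URL."""
    return parser.can_fetch(user_agent, url)


print(is_crawlable("https://www.example.com/blog/seo-for-developers"))
print(is_crawlable("https://www.example.com/cart/checkout"))
print(parser.site_maps())  # sitemap URLs declared in the file (Python 3.8+)
```

Note that this only models a crawler that chooses to honour the file. Nothing in robots.txt technically prevents a bot from requesting a disallowed URL.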

That aside, robots.txt files help crawlers and bots index a website and should be installed as a standard feature of any website build. Google invests a lot of time and money in crawling and indexing the internet so that a user’s keyword search returns the best results. Anything that you can do to reduce that overhead works in your favour.

Sitemaps.xml Files Make Google’s Work Even Easier

In simple terms, an XML sitemap is a list of your website’s URLs. It acts as a roadmap to tell search engine crawlers and bots what content is available for indexing and how to get to it. This is why sitemaps should also be installed as a standard feature of any website development. These files can be created in two ways:

- A CMS plugin. The most popular CMS in the world is WordPress, and the most popular sitemap plugin for it is developed by Yoast. With this option you just install the plugin and it will periodically generate and install the sitemap XML files on the web server.

- An online XML sitemap generator or a third-party application such as Screaming Frog. If you use this option, you will need to upload the XML files yourself to the root directory of your web server.

It’s worth noting that you can create more than one XML file. This allows larger websites to separate out different types of pages and media, such as:

- website pages

- blog posts

- images, and

- products
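When a site splits its URLs across several files like this, the sitemaps protocol also defines a sitemap index file that lists the individual sitemaps, so crawlers only need to be pointed at one location. The sketch below generates such an index with Python’s standard library; the file names and the `build_sitemap_index` helper are hypothetical, and a CMS plugin would normally produce this file for you.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical child sitemaps, one per content type.
CHILD_SITEMAPS = [
    "https://www.example.com/page-sitemap.xml",
    "https://www.example.com/post-sitemap.xml",
    "https://www.example.com/image-sitemap.xml",
    "https://www.example.com/product-sitemap.xml",
]


def build_sitemap_index(sitemap_urls):
    """Return a sitemap index (as bytes) that points at each child sitemap."""
    ET.register_namespace("", SITEMAP_NS)  # emit xmlns="..." without a prefix
    root = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for url in sitemap_urls:
        entry = ET.SubElement(root, f"{{{SITEMAP_NS}}}sitemap")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)


index_xml = build_sitemap_index(CHILD_SITEMAPS)
print(index_xml.decode("utf-8"))
```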

In turn, the XML files themselves can give extra bits of data to the search engine bots, such as when a page was last modified and which language versions exist, as you can see from the example below:

<?xml version="1.0" encoding="UTF-8"?>

<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9" xmlns:xhtml="http://www.w3.org/1999/xhtml">

<url>

<loc>https://www.example.com</loc>

<lastmod>2017-10-06</lastmod>

<changefreq>weekly</changefreq>

<priority>0.9</priority>

<xhtml:link rel="alternate" hreflang="en" href="https://www.example.com"/>

<xhtml:link rel="alternate" hreflang="fr" href="https://www.example.com/fr"/>

</url>

</urlset>

It is worth investing the time to get your sitemap.xml files well formatted for your website.
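If you need to generate a sitemap yourself rather than rely on a plugin, building it with an XML library avoids the quoting and escaping mistakes that creep in when the file is assembled by hand. The following is a minimal sketch using Python’s standard library; the `build_sitemap` helper and the page data are assumptions made for the example.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"

# Serialise with a default namespace for sitemap tags and an xhtml: prefix.
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("xhtml", XHTML_NS)


def build_sitemap(pages):
    """Return a urlset document (as bytes) for a list of page dictionaries."""
    root = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page in pages:
        url = ET.SubElement(root, f"{{{SITEMAP_NS}}}url")
        # Only "loc" is required; the other fields are optional hints.
        for field in ("loc", "lastmod", "changefreq", "priority"):
            if field in page:
                ET.SubElement(url, f"{{{SITEMAP_NS}}}{field}").text = str(page[field])
        # One alternate link per language version of the page.
        for lang, href in page.get("alternates", {}).items():
            ET.SubElement(
                url,
                f"{{{XHTML_NS}}}link",
                {"rel": "alternate", "hreflang": lang, "href": href},
            )
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)


# Hypothetical page data, used only for illustration.
pages = [
    {
        "loc": "https://www.example.com",
        "lastmod": "2017-10-06",
        "changefreq": "weekly",
        "priority": 0.9,
        "alternates": {
            "en": "https://www.example.com",
            "fr": "https://www.example.com/fr",
        },
    },
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml.decode("utf-8"))
```

The output can be saved as sitemap.xml in the root directory of the web server.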

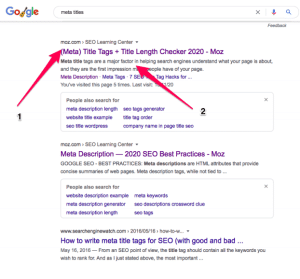

Metadata Is What Many Users See First

Metadata is simply “data that provides information about other data”. In the context of SEO, it is “data about your web page”. The two most important metadata tags in SEO are:

- Meta Titles (sometimes called the title tag), and

- Meta Descriptions.

Together, these are what users see listed in SERPs, and they are one of the reasons why people click through to your website rather than someone else’s. Consider metadata as adverts for your web pages, similar to Google Ads in that sense.

META TITLE

As the name implies, the title tag is an HTML element that specifies the title of a web page. Title tags are displayed on SERPs as the clickable headline links.

META DESCRIPTION

The meta description is an HTML meta element (a meta tag whose name attribute is set to description) that should provide a brief summary of a web page, ideally in 155 characters or fewer.
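A quick way to keep both tags in check during development is to extract them from the rendered HTML and test their lengths. The sketch below does this with Python’s standard library; the `check_metadata` helper and the sample page are hypothetical, and the 155-character limit is simply the guideline mentioned above rather than a hard rule enforced by search engines.

```python
from html.parser import HTMLParser


class MetaParser(HTMLParser):
    """Extracts the title element and the meta description from a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_data(self, data):
        if self._in_title:
            self.title += data

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False


def check_metadata(html, max_description=155):
    """Return the title, the description and whether the description fits."""
    parser = MetaParser()
    parser.feed(html)
    description = parser.description.strip()
    return {
        "title": parser.title.strip(),
        "description": description,
        "description_length": len(description),
        "description_ok": 0 < len(description) <= max_description,
    }


# Hypothetical page, used only for illustration.
page = """
<html><head>
<title>SEO for Developers | Example Agency</title>
<meta name="description" content="A technical SEO primer for web developers covering tracking, structured data, robots.txt, sitemaps and metadata.">
</head><body></body></html>
"""

print(check_metadata(page))
```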